Research Techniques and Methods for studying Human Information Behavior on the Web

Learn about how user research techniques are relevant in the web development, IA and UX fields. Read these summaries and reflections on part 4 in Donald Case's book on Human Information Behavior (HIB).

Chapter 8: The Research Process

Abstract:

Chapter 8 discusses the nuts and bolts of designing research. It is a tad academic, but its argument is compelling. Case asserts that one should use techniques for measuring and observing to minimize human interpretative error and allow us to compare results across a common foundation.

He notes common sources of human error:

- poor observational skills

- tendency to overgeneralize from small samples

- tendency to make up information to support our beliefs

- ego and defensiveness

- prejudice

- tendency to assume we can't explain complex issues

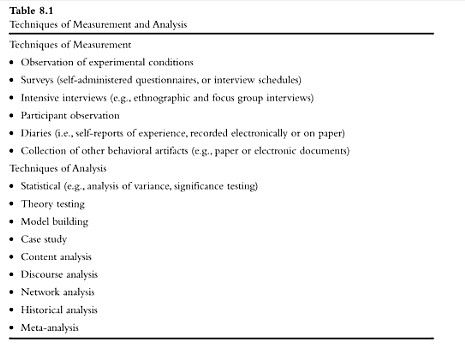

His table on page 177 gives a helpful overview of techniques for measurement (collecting data), as opposed to those for analysis of data.

Next, Case outlines the 5 stages in the research process:

- Conceptualization: where research questions are formed

- Study design: a plan for exploring the question via research, involves defining the data sought

- Technique application: where possible, multiple sources of data are more convincing

- Analysis & Interpretation of data collected

- Summarization & Conclusions

Case briefly explains the difference between the inductive and deductive approaches and notes that most researchers use both.

- Inductive starts with a specific case and attempts to create a general principle or theory.

- Deductive starts with a general theory or principle and tests it scientifically.

For those testing a deductive theory, it is particularly important to define the research problem and hypotheses, operationalize their objects, and create an observational definition to guide the study's observation.

Regardless of your approach, the believability of your study will largely rest on its validity and reliability. A study is valid if its measurement procedures accurately represent what you're studying. It is reliable if you get the same results when the study is repeated. It's hard to be both highly reliable and highly valid. Much quantitative (number-based) research tends to be repeatable but is of questionable validity because of its artificial lab conditions. On the other hand, qualitative research can be hard to repeat.
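One common way to put a number on reliability is a test-retest check: measure the same participants twice and correlate the two sets of scores. The sketch below is my own illustration, not something taken from Case; the function name `pearson_r` and the session data are hypothetical.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of cross-products and sums of squared deviations from the means
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary in both sessions")
    return cov / sqrt(var_x * var_y)

# Hypothetical task-completion times (seconds) for the same five
# participants, measured in two separate sessions
first_session = [42.0, 55.0, 38.0, 61.0, 47.0]
second_session = [44.0, 53.0, 40.0, 63.0, 45.0]

reliability = pearson_r(first_session, second_session)
print(f"test-retest correlation: {reliability:.2f}")
```

A correlation close to 1 suggests the measurement is repeatable. It says nothing about validity: a study can reliably measure the wrong thing.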

Before selecting a research method, you should consider your study's:

- Purpose: is your goal to:

    - explore

    - describe

    - explain?

- Units of analysis: what will you be observing?

    - people (either individually or in groups) or

    - artifacts (objects or events)

- Time: when will you do your research?

    - cross-sectional: all your research is at one point in time

    - longitudinal: your measurements will be made over several points in time

Finally, with a brief note about the difficulties and importance of following some of these in an internet setting, Case gives 4 general ethical guidelines for research studies:

- No harm should come to participants in a study

- Study participants should not be deceived or misled in any way

- Participation in an investigation should be voluntary

- Any data collected about individuals should be confidential

Review:

In general, I tend to pursue inductive methods. A usability study, for instance, is likely to take a few specific people attempting tasks on one website and attempt to generalize or explain any problems they have. As Case recommends, though, I often combine methods - using Morae to capture quantitative data such as completion time and rate, but using qualitative comments to help in the analysis phase. In usability, the longitudinal time consideration is particularly important: one must benchmark the before and after of any redesign to demonstrate ROI and gain institutional support for UX and IA design work.
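As a rough illustration of the before-and-after benchmark, the sketch below summarizes completion rate and mean time on task for two rounds of testing. The data and the `summarize` helper are hypothetical; it assumes the session results have already been exported into plain Python dictionaries and does not use any Morae API.

```python
from statistics import mean

def summarize(sessions):
    """Return (completion rate, mean time in seconds of successful attempts)."""
    if not sessions:
        raise ValueError("no sessions to summarize")
    completed = [s["seconds"] for s in sessions if s["completed"]]
    rate = len(completed) / len(sessions)
    # Mean time is undefined when nobody completed the task
    avg_time = mean(completed) if completed else None
    return rate, avg_time

# Hypothetical results for one task, four participants per round
before = [
    {"completed": True, "seconds": 95},
    {"completed": False, "seconds": 180},
    {"completed": True, "seconds": 120},
    {"completed": False, "seconds": 180},
]
after = [
    {"completed": True, "seconds": 70},
    {"completed": True, "seconds": 85},
    {"completed": True, "seconds": 90},
    {"completed": False, "seconds": 180},
]

before_rate, before_time = summarize(before)
after_rate, after_time = summarize(after)
print(f"completion rate: {before_rate:.0%} -> {after_rate:.0%}")
print(f"mean time on task: {before_time:.1f}s -> {after_time:.1f}s")
```

With samples this small the comparison is only descriptive, which is where the qualitative comments help explain why the numbers moved.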

Chapter 9: Method Types

Abstract: Case outlines and gives examples of the various methods used for HIB research, which include:

- case study

- lab experiments

- field experiments

- postal surveys

- email and web surveys

- brief interviews

- intensive interviews

- focus group interviews

- network analysis

- discourse analysis

- diaries and experience sampling

- historical analysis (unobtrusive)

- content analysis (unobtrusive)

- using multiple data sources in a single investigation

- meta-analysis

He notes that surveys (and interviews) are the most-used type of method in information science. I focused mainly on email/web survey methods, for which Case notes the following problems:

- difficulty of establishing relevance to users

- can seem impersonal

- can bias the sample towards those with internet access and technological skills

- technology can make calculating sample size and response rate difficult

- they don't capture the complexity and context that more observational/interview methods do.

On the bright side, however, email/web surveys don't require much typing and are easier to use/complete, which can increase the user response rate and lower the developer's time and effort.
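The response-rate difficulty noted in the list above is partly a bookkeeping problem: bounced invitations never reached anyone, so counting them inflates the denominator. A minimal sketch, assuming a simplified definition (completed responses divided by deliverable invitations) and hypothetical figures:

```python
def response_rate(invited, undeliverable, completed):
    """Completed responses as a share of invitations that could be delivered."""
    reachable = invited - undeliverable
    if reachable <= 0:
        raise ValueError("no deliverable invitations")
    if completed > reachable:
        raise ValueError("more completions than deliverable invitations")
    return completed / reachable

# Hypothetical email survey: 500 invitations, 40 bounced, 138 completed
rate = response_rate(invited=500, undeliverable=40, completed=138)
print(f"response rate: {rate:.1%}")
```

Open web surveys are harder still, since there may be no invitation list to count against at all.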

Review:

Case's chapter is an excellent orientation to each method, but for a better sense of any particular method, one will need to look elsewhere. I, like Wilson (2007), also found it curious that "observation as a method is not covered...it is a standard ethnographic method and, perhaps, ought to be more used in field investigations."

For those, like myself, who are interested in electronic surveys, I recommend Dillman's (2009) tailored design approach to online surveys, which focuses on increasing the benefits and decreasing the costs of responding for participants, as well as establishing trust. The summary tables on pages 35 and 38 are an excellent cheat sheet to this approach. Examples of how to operationalize these foci are:

- Establish trust by:

    - getting sponsorship from a worthy organization

    - revealing the importance of the survey's task in the opening page

    - ensuring confidentiality by asking for few traditionally-identifying details about participants

- Reduce the participants’ cost by:

    - using non-subordinating language

    - including only essential questions (keep it short)

    - organizing questions in order to increase interest in the beginning and promote overall ease in its use.

- Increase the survey’s benefits for participants by:

    - offering a brief description of the survey's purpose on the opening page

    - respectfully asking for help

    - expressing appreciation both in advance and at the end of the survey

An excellent free, open-source software option, should you choose to roll your own online survey, is LimeSurvey.

For some pertinent thoughts from practitioners of user research, I recommend:

- User research topic on UXmatters

- Methods topic on Boxes and Arrows

Bibliography

Case, D. (2008). Looking for information: a survey of research on information seeking, needs, and behaviors, 2nd Edition. Emerald Group Publishing Limited. ISBN: 978-0123694300.

Dillman, D. A., Smyth, J. D., & Christian, L. M. (2009). Internet, mail, and mixed mode surveys: The tailored design method. Hoboken, N.J.: Wiley & Sons. ISBN: 978-0471698685.